Appreciation from MooseFS open-source user

Last week we received a thank-you e-mail from Tianon, a user of the open-source version of MooseFS and a valued GitHub contributor. Tianon expressed his gratitude for …

February 17th, 2023

Due to the growing COVID-19 outbreak, as a Software Defined Storage vendor, we would like to help as much as we can during these tough times. We hereby offer a license and support for our software, MooseFS Pro, completely free of charge to all laboratories and organizations working to combat the causes of the pandemic and mitigate its effects. MooseFS Pro helps to build reliable and efficient storage clusters in minutes, which may be a great help when time matters.

MooseFS Pro is software that allows you to build fast and reliable petabyte-scale file systems on any type of server. It is used worldwide in many bioinformatics laboratories, computer modelling facilities, research institutes, etc.

We believe it can also be useful wherever the pandemic is being fought every day, such as in hospitals, laboratories, and public health institutions that deal with large amounts of data.

This offer is open to any organization directly involved in research on viruses, vaccines, pandemic modelling, crisis management, etc. We will provide a license for any required cluster size and for any required period of time. If necessary, we can also help with setting up and starting the cluster quickly.

If you are interested, please do not hesitate to contact us directly at contact@moosefs.com. Please briefly describe your needs and let us know how a MooseFS Pro storage cluster will help in the fight against the pandemic in your case.

This is what we can do to help mitigate the COVID-19 crisis.

MooseFS Team

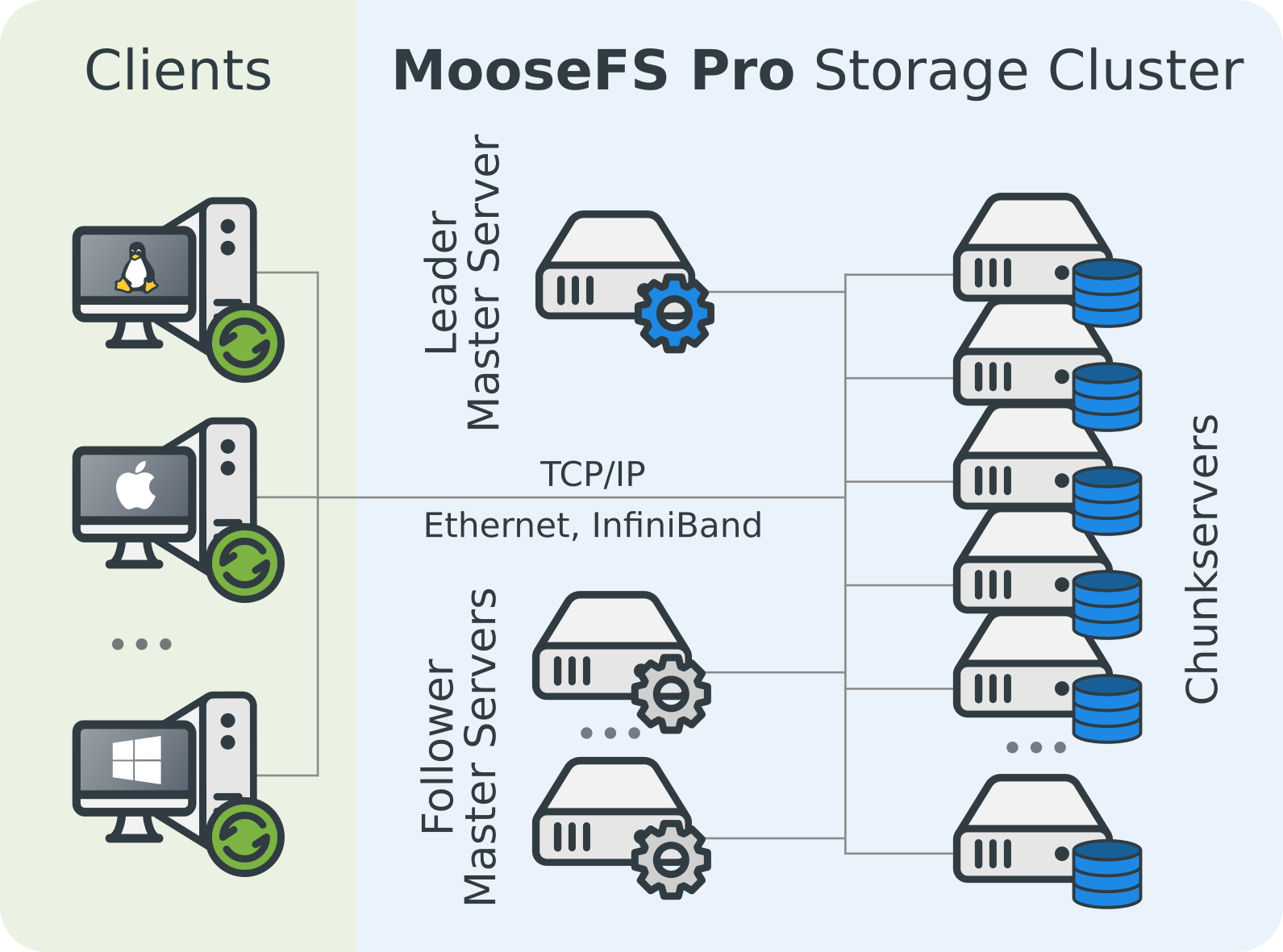

No Single Point of Failure, a.k.a. a SPOF-less configuration. Metadata of the file system is kept in two or more copies on physically redundant servers. User data is redundantly spread across the storage servers in the system.

MooseFS enables users to save a lot of HDD space while maintaining the same level of data redundancy. In the most common scenarios you will save at least 55% of HDD space. Available from MooseFS 4.0 Pro.

Storage can be extended up to 16 exabytes (~16000 petabytes), which allows us to store more than 2 billion files.

Designed to support high performance I/O operations. User data can be read/written simultaneously on many storage nodes, thereby avoiding single central server or single network connection bottlenecks.

MooseFS is a Fault-tolerant, Highly available, Highly performing, Scaling-out, Network distributed file system. It spreads data over several physical commodity servers, which are visible to the user as one virtual disk. It is POSIX compliant and acts like any other Unix-like file system supporting:

It works with all applications that require a standard file system.

You can add or remove chunk servers on the fly. Keep in mind, however, that it is not wise to disconnect a chunk server if it holds the only copy of a chunk in the file system (the CGI monitor marks such chunks in orange). You can also disconnect (change) an individual hard drive. The scenario for this operation would be:

If you have hot-swappable disk(s), you should follow these steps:

If you follow the above steps, client computers will keep working without interruption and MooseFS users will not notice the operation.

MooseFS does not immediately erase files on deletion, to allow you to revert the delete operation. Deleted files are kept in the trash bin for the configured amount of time before they are deleted.

You can configure how long files are kept in the trash, and you can empty the trash manually to release the space. More details are available in the Reference Guide, in the section "Operations specific for MooseFS".

In short, the time for which a deleted file is kept can be checked with the mfsgettrashtime command and changed with mfssettrashtime.
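As a minimal sketch of how these two commands might be scripted, the Python helper below only builds the argument lists and prints them. It assumes that the MooseFS client tools are installed, that the trash time is given in seconds, and that the -r option applies the command recursively. The path /mnt/mfs/projects and the function names are hypothetical and used only for illustration.

```python
import shlex
import subprocess


def get_trashtime_cmd(path, recursive=False):
    """Build the argv that checks the trash time of `path`."""
    cmd = ["mfsgettrashtime"]
    if recursive:
        cmd.append("-r")  # assumption: -r walks the directory tree
    cmd.append(path)
    return cmd


def set_trashtime_cmd(seconds, path, recursive=False):
    """Build the argv that sets the trash time of `path` to `seconds`."""
    if seconds < 0:
        raise ValueError("trash time must not be negative")
    cmd = ["mfssettrashtime"]
    if recursive:
        cmd.append("-r")  # assumption: -r walks the directory tree
    cmd += [str(seconds), path]
    return cmd


def run(cmd):
    """Run a built command; requires the MooseFS tools and a mounted file system."""
    return subprocess.run(cmd, check=True, capture_output=True, text=True).stdout


if __name__ == "__main__":
    one_day = 24 * 60 * 60
    target = "/mnt/mfs/projects"  # hypothetical directory on a MooseFS mount
    # Print the commands instead of running them, so the sketch is safe to try.
    print(shlex.join(get_trashtime_cmd(target)))
    print(shlex.join(set_trashtime_cmd(7 * one_day, target, recursive=True)))
```

Passing a built list to run() would execute it against a real mount; keeping construction and execution separate makes it easy to review a command before it changes anything.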

Yes, since MooseFS 3.0.

The ability to perform one-node-at-a-time upgrades, hardware replacements and additions without disruption of service. This feature allows you to keep your hardware platform up to date with no downtime.

The assignment of different categories of data to various types of storage media to reduce total storage cost. Hot data can be stored on fast SSDs, and infrequently used data can be moved to cheaper, slower mechanical hard disk drives.

All system components are redundant, and in case of a failure an automatic failover mechanism takes over transparently to the user.

Enhanced performance achieved through dedicated client (mount) components specially designed for Linux, FreeBSD and macOS systems.

Performs all I/O operations in parallel threads of execution to deliver high performance read/write operations.

Exceptional performance on almost every hardware platform that runs a POSIX-compliant operating system such as Linux, macOS or FreeBSD.

+48 22 110 00 63